For years, the AI narrative was dominated by “bigger is better.” Trillion-parameter models running on massive server farms were the only game in town. But in 2026, the pendulum has swung. The most exciting developments in AI are no longer confined to the cloud; increasingly, they’re happening right in your pocket.

The Efficiency Revolution

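Small Language Models (SLMs), typically under 7 billion parameters, have reached a tipping point. Thanks to techniques like 4-bit quantization and optimization for dedicated NPUs (Neural Processing Units), models that once needed server-class hardware can now run locally on a laptop or even a high-end smartphone. The arithmetic is simple: a 7-billion-parameter model stored in 16-bit floats needs about 14 GB for its weights alone, while at 4 bits per weight it needs roughly 3.5 GB, which fits in the memory of many current laptops and flagship phones.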
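To make the quantization idea concrete, here is a minimal Python sketch of symmetric, per-group 4-bit quantization, plus the back-of-the-envelope memory arithmetic from the paragraph above. The function names and the absmax scheme are illustrative assumptions, not the exact format used by any particular runtime or NPU toolchain.

```python
# Minimal sketch of symmetric, per-group 4-bit weight quantization.
# Assumption: a generic absmax scheme for illustration only; real runtimes
# use their own formats (packing, scale precision, zero points, etc.).


def quantize_4bit(weights, group_size=32):
    """Quantize floats to signed 4-bit integers in [-7, 7], one scale per group."""
    codes, scales = [], []
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        absmax = max(abs(w) for w in group)
        # Map the largest magnitude in the group to 7; guard against all-zero groups.
        scale = absmax / 7 if absmax > 0 else 1.0
        scales.append(scale)
        codes.extend(max(-7, min(7, round(w / scale))) for w in group)
    return codes, scales


def dequantize_4bit(codes, scales, group_size=32):
    """Reconstruct approximate floats from 4-bit codes and per-group scales."""
    return [c * scales[i // group_size] for i, c in enumerate(codes)]


def weight_memory_gb(num_params, bits_per_weight):
    """Approximate weight storage in GB (1 GB = 1e9 bytes), ignoring scale overhead."""
    return num_params * bits_per_weight / 8 / 1e9


weights = [0.12, -0.5, 0.33, 0.0, 0.91, -0.07, 0.4, -0.88]
codes, scales = quantize_4bit(weights, group_size=4)
restored = dequantize_4bit(codes, scales, group_size=4)

print(weight_memory_gb(7e9, 16), weight_memory_gb(7e9, 4))  # 14.0 3.5
```

Each weight now occupies 4 bits instead of 16, at the cost of a rounding error of at most half a quantization step per weight. Storing one scale per group adds a small overhead that the memory estimate above ignores.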

Why Edge AI Matters

- Privacy: Your data never leaves your device. This is critical for medical, legal, and personal assistant applications.

- Latency: No network round-trips, so response time depends only on on-device compute. That makes real-time voice and video agents feel truly conversational.

- Cost: Once you own the hardware, running a model locally costs electricity, not API credits. For always-on agents, this changes the economic equation entirely.
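The cost argument comes down to simple arithmetic. Below is a rough Python sketch comparing a month of local inference (electricity only) with a metered API for the same workload. All input values are hypothetical placeholders, not measured figures or real price quotes; substitute your own device power draw, tariff, token volume, and API pricing.

```python
# Back-of-the-envelope monthly cost comparison for an always-on agent.
# Every number passed in below is a hypothetical placeholder.

HOURS_PER_MONTH = 24 * 30


def local_monthly_cost(avg_power_watts, price_per_kwh, hours=HOURS_PER_MONTH):
    """Electricity cost of running inference locally (hardware cost not included)."""
    kwh = avg_power_watts * hours / 1000
    return kwh * price_per_kwh


def api_monthly_cost(tokens_per_month, price_per_million_tokens):
    """Metered cost of sending the same workload to a hosted API."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


# Hypothetical inputs, for illustration only.
local = local_monthly_cost(avg_power_watts=30, price_per_kwh=0.20)
api = api_monthly_cost(tokens_per_month=50_000_000, price_per_million_tokens=1.00)
print(f"local: ${local:.2f}/month, api: ${api:.2f}/month")
```

The sketch deliberately leaves out the up-front hardware cost, so where the break-even point falls depends on how heavily the agent is used and how long the device stays in service.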

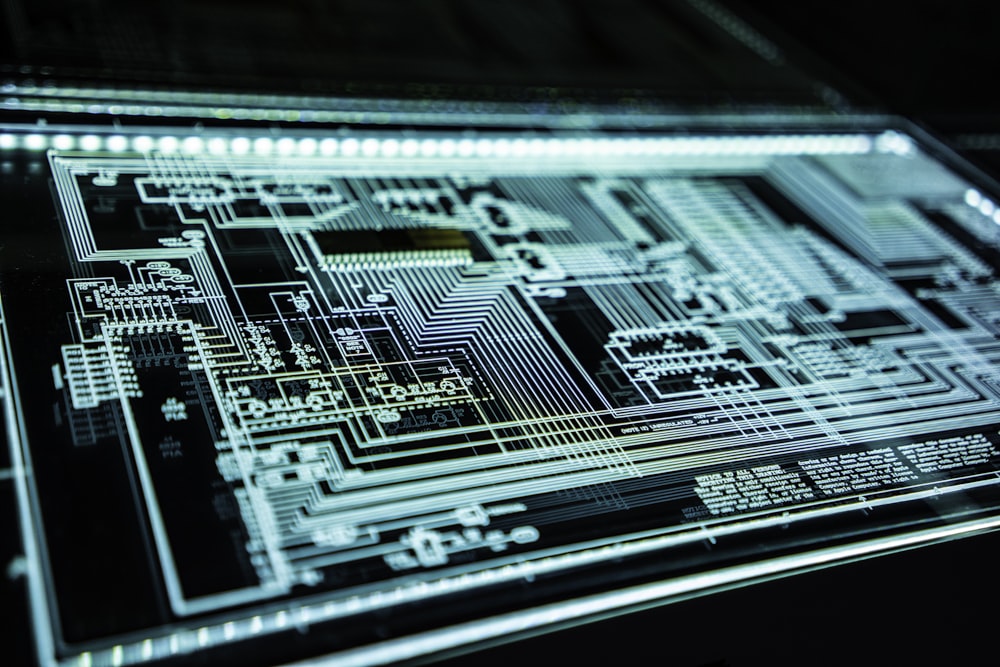

The Hardware Ecosystem

Hardware manufacturers are all-in. Apple’s latest M-series chips and NVIDIA’s consumer cards are increasingly marketed on their AI inference capabilities. We are seeing devices ship with pre-loaded SLMs, ready to assist the user out of the box without an internet connection.

As we look ahead, the “Edge AI” era promises to democratize intelligence, putting powerful, private agents with no per-query fees into the hands of everyone.